Although blockchain technology’s first widely adopted use case arrived only in 2009 with Bitcoin’s release, the idea of distributed networks dates back to at least the early 1980s.

Computer scientists have long researched how to build efficient decentralized systems. As with any innovation, developers have repeatedly hit brick walls, and several of the resulting problems are now widely recognized.

One of these is the ‘blockchain trilemma,’ a theory stating that a public blockchain can fully deliver only two of three benefits (security, decentralization, and scalability) and must sacrifice or compromise on the third.

One of these elements, scalability, has been a hot topic and an uphill battle for developers since Bitcoin’s introduction. This article explores the scalability problem in blockchain technology and why resolving it, or at least improving it, matters.

The scalability problem

Let’s first explore this issue with a loose business analogy. An enterprise is said to be scalable when it can reach a much wider audience or grow rapidly without being hampered by its available resources.

Similarly, a blockchain is scalable when it can support a growing number of transactions without a drastic drop in performance or rise in costs. In Bitcoin’s early days, cryptocurrency usage was low.

Moreover, blockchains at that time handled only one type of transaction, and networks were far less busy than they are now. Fast forward several years, and the volume and variety of work that distributed ledgers now process have left many cryptocurrencies struggling to scale.

For instance, Bitcoin has historically had a block size of 1-2MB, which lets it process around 5 transactions per second. With millions of people using cryptocurrencies, this falls well short of the likes of VISA, which can process about 1,700 transactions per second.
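To see where a figure of roughly 5 transactions per second can come from, here is a minimal back-of-the-envelope sketch. The 10-minute block interval is Bitcoin’s target; the 350-byte average transaction size is an assumption chosen for illustration, and the function name is hypothetical.

```python
def transactions_per_second(block_size_bytes: int,
                            avg_tx_size_bytes: int,
                            block_interval_seconds: int) -> float:
    """Rough throughput ceiling: transactions that fit in a block / block time."""
    txs_per_block = block_size_bytes // avg_tx_size_bytes
    return txs_per_block / block_interval_seconds


# Assumptions for illustration: 1 MB blocks, 350-byte average transaction,
# one block every 600 seconds (Bitcoin's 10-minute target).
bitcoin_tps = transactions_per_second(1_000_000, 350, 600)
print(f"Estimated throughput: {bitcoin_tps:.1f} transactions per second")
```

Under these assumptions the ceiling lands between 4 and 5 transactions per second. The estimate moves with the assumed transaction size, so treat it as an order-of-magnitude check rather than a measurement.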

Numerous factors limit scalability, but one of the most glaring is inherent capacity. Many blockchains were designed to handle only a certain quantity of data.

Even when particular modifications are implemented (such as increasing the block size), the nodes in the network may not have enough storage and computing resources to handle the larger volume of data.

Unsurprisingly, insufficient scalability causes many user-facing problems, which the next section explores.
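The storage pressure from larger blocks can be estimated with simple arithmetic. The sketch below assumes every block is completely full and that one block is produced every 10 minutes (Bitcoin’s target interval). The block sizes compared are hypothetical values, not figures from any specific proposal.

```python
# Assumed block interval: one block every 10 minutes.
BLOCKS_PER_DAY = 24 * 60 // 10  # 144 blocks


def yearly_growth_gb(block_size_mb: float) -> float:
    """Upper bound on ledger growth per year if every block is full."""
    return block_size_mb * BLOCKS_PER_DAY * 365 / 1000


# Hypothetical block sizes, for comparison only.
for size_mb in (1, 8, 32):
    print(f"{size_mb:>2} MB blocks -> up to {yearly_growth_gb(size_mb):,.1f} GB per year")
```

Because growth is linear in block size, every node that keeps a full copy of the ledger pays proportionally more in storage and bandwidth, which is why a bigger block is not a free fix.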

Why scalability matters

The most frustrating aspect of many so-called ‘unscaled’ blockchains is their high settlement fees, which are particularly prevalent on mining networks, that is, blockchains that use the proof-of-work (PoW) consensus mechanism to confirm transactions.

Of course, the most popular examples are Bitcoin and Ethereum. Over the last few years, Ethereum has become notorious for being expensive because of the platform’s unusually high gas (transaction) fees.

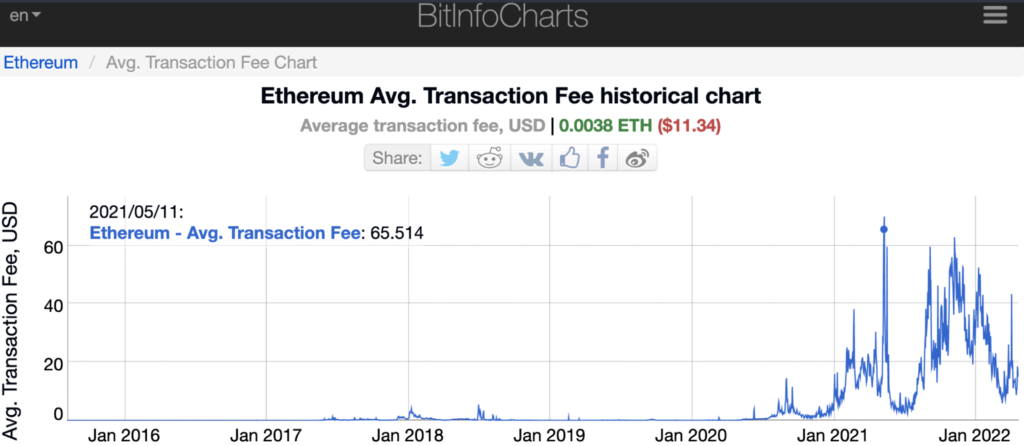

The chart above, from BitInfoCharts, traces Ethereum’s average transaction fee since inception. At the time of writing, the average fee is $11.34, which is steep for everyday payments; it peaked at around $65 in May 2021.

Unreasonable payment costs discourage people from using a blockchain, which ultimately derails adoption across a wide range of sectors. Moreover, networks that do not scale competitively have slower transaction speeds, making the entire process time-consuming and burdensome.

As mentioned previously, blockchains today are far more versatile than they once were, handling a range of complex tasks for a growing user base. Without scalability, distributed ledgers remain limited in usability and accessibility, leaving them uncompetitive against legacy centralized platforms.

The current scaling solutions

As Austrian author Gerhard Gschwandtner put it, “Problems are nothing but wake-up calls for creativity.” Scalability challenges have sent developers down a rabbit hole in search of sustainable solutions.

Here, we’ll explore the primary approaches used to fix the problem or substantially reduce it.

Proof-of-stake (PoS)

After PoW, proof-of-stake is the second-most utilized consensus mechanism, and it can significantly improve scalability. But how? In mining-based networks, transactions are confirmed block by block by computers (miners) competing to solve a computational puzzle.

The main problem is that the blocks themselves are generally limited to a specific capacity. PoS does away with miners entirely: validators confirm transactions without the need for computational mining.

This system requires users to ‘lock’ or stake their coins in the network to become validators and earn rewards; the more you stake, the greater your chance of being chosen for each settlement and the higher your rewards.
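The idea that a larger stake means a better chance of being chosen can be sketched as a stake-weighted random draw. This is a simplified illustration, not the selection algorithm of any particular network; the validator names, stake amounts, and `pick_validator` helper are all hypothetical.

```python
import random
from collections import Counter


def pick_validator(stakes: dict[str, float], rng: random.Random) -> str:
    """Choose one validator with probability proportional to its stake."""
    names = list(stakes)
    weights = [stakes[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


# Hypothetical validators and the coins each has locked up.
stakes = {"alice": 60.0, "bob": 30.0, "carol": 10.0}

rng = random.Random(42)  # fixed seed so the simulation is repeatable
counts = Counter(pick_validator(stakes, rng) for _ in range(10_000))

for name, wins in counts.most_common():
    print(f"{name}: chosen {wins} times ({wins / 10_000:.1%})")
```

Over many rounds, each validator’s share of selections approaches its share of the total stake. Real networks layer further rules on top of this, which are outside the scope of this sketch.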

Confirming transactions without mining is the key ingredient that lets proof-of-stake networks reach scalability levels far beyond those of their proof-of-work counterparts. Prominent examples of blockchains using this method are Polkadot, Cosmos, Solana, Cardano, BNB Beacon Chain, Avalanche, and plenty of others.

Sharding

Ethereum 2.0, Ethereum’s highly anticipated upgrade, is planned to include sharding (along with proof-of-stake). This technique is still somewhat experimental and is less common than proof-of-stake. Examples of projects currently utilizing this mechanism are Zilliqa, Qtum, and Tezos.

Sharding is a technique borrowed from database design in which a network is split into smaller partitions (called shards) to ‘spread the load.’ By dividing a blockchain into sections, the network can process numerous transactions simultaneously because no single part of it bears the whole workload.

Ultimately, sharding reduces latency and increases throughput, which boosts scalability.
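The ‘spread the load’ idea can be illustrated by assigning each transaction to a shard based on a hash of the sender’s account. This is a minimal sketch under stated assumptions: four shards, assignment by sender only, and made-up account names. It ignores transactions that span two shards, which real sharded networks must handle.

```python
import hashlib
from collections import defaultdict

NUM_SHARDS = 4  # assumed shard count, for illustration only


def shard_for(account: str, num_shards: int = NUM_SHARDS) -> int:
    """Map an account to a shard deterministically using a SHA-256 hash."""
    digest = hashlib.sha256(account.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def partition(transactions, num_shards: int = NUM_SHARDS):
    """Group (sender, recipient, amount) tuples by the sender's shard."""
    shards = defaultdict(list)
    for sender, recipient, amount in transactions:
        shards[shard_for(sender, num_shards)].append((sender, recipient, amount))
    return dict(shards)


# Hypothetical transactions.
txs = [("alice", "bob", 5), ("carol", "dave", 2),
       ("erin", "frank", 7), ("alice", "carol", 1)]

for shard_id, batch in sorted(partition(txs).items()):
    print(f"shard {shard_id}: {batch}")
```

Because the mapping is deterministic, every node agrees on which shard owns an account, and each shard can work through its own batch in parallel with the others.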

Layer-2 networks

The solutions discussed so far apply to what are termed layer-1 networks, meaning the base blockchains themselves. A layer-2 is a secondary blockchain or protocol that operates on top of another blockchain.

Ethereum is one project with several layer-2 protocols, most notably Polygon, but also others like Cartesi, Arbitrum, ParaState, and Optimism. Layer-2 networks come in various forms like nested blockchains, state channels, and sidechains.

While these mechanisms differ from one another, the shared aim is to run activity alongside the original distributed ledger. The workload is split between layers 1 and 2 to improve overall efficiency.
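One way to picture the split in workload is a simplified state channel: the parties exchange many payments off-chain, and only the net result is recorded on layer 1. The sketch below is a toy model with hypothetical names and amounts. It leaves out the signatures, deposits, and dispute handling that real layer-2 protocols rely on.

```python
from collections import defaultdict


def settle(off_chain_payments):
    """Collapse many off-chain payments into one net balance change per party."""
    net = defaultdict(int)
    for payer, payee, amount in off_chain_payments:
        net[payer] -= amount
        net[payee] += amount
    # Parties whose payments cancel out need no on-chain update at all.
    return {party: delta for party, delta in net.items() if delta != 0}


# Hypothetical payments exchanged off-chain (layer 2).
payments = [("alice", "bob", 5), ("bob", "alice", 2),
            ("alice", "bob", 1), ("bob", "alice", 3)]

settlement = settle(payments)
print(f"{len(payments)} off-chain payments settle on layer 1 as: {settlement}")
```

Here four payments reduce to a single on-chain update in which alice is down 1 and bob is up 1, so the base layer handles far fewer transactions than the users actually made.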

Closing thoughts

By now, you should appreciate why scalability is a challenge in blockchain technology and why solving it is essential. Although distributed ledgers have existed for over a decade, they are far from perfect, as the trilemma mentioned earlier shows.

Although security and decentralization are priorities in their own right (and concerns about both have been raised for virtually every network), scalability is crucial mainly for driving wider adoption among crypto users.